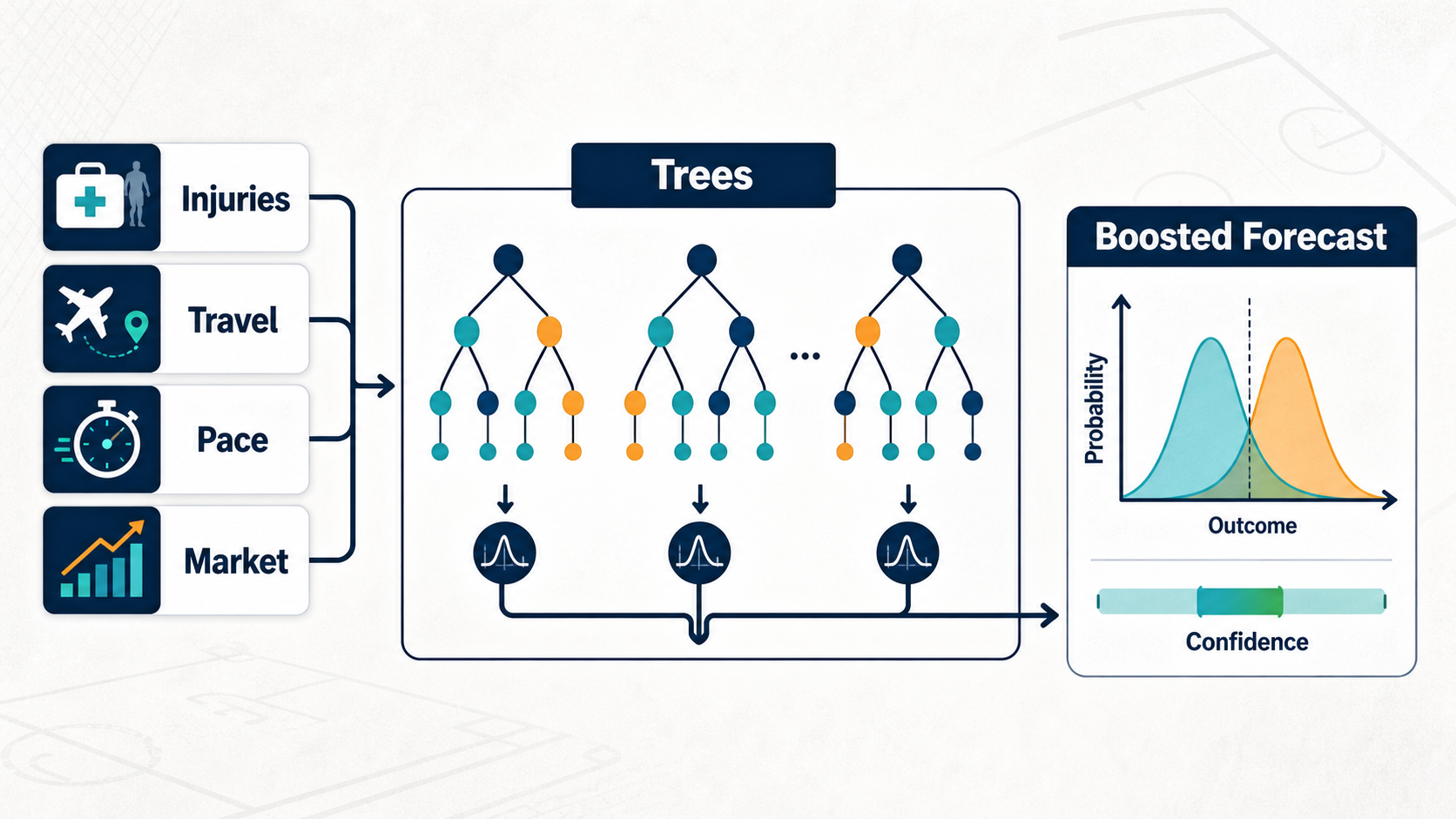

Can a model learn that an NFL injury matters differently when travel, pace, opponent, and market context all point the same way? That is the practical question behind this method family.

The plain-English version

Tree models split data into branches. Instead of assuming one smooth line, they ask a series of sports questions: is the quarterback healthy, is the pace high, is the opponent weak against the run, did the market move, and what happened in similar branches before?
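A single branch decision can be sketched in a few lines. The example below is a minimal illustration, not any library's implementation: it scans candidate thresholds on one numeric feature and keeps the split with the lowest weighted Gini impurity. The `pace` values and `went_over` labels are made-up toy data.

```python
def gini(labels):
    """Gini impurity of a list of 0/1 labels."""
    if not labels:
        return 0.0
    p = sum(labels) / len(labels)
    return 2 * p * (1 - p)


def best_split(values, labels):
    """Return (threshold, weighted_impurity) for the best 'value <= threshold' split."""
    best = (None, float("inf"))
    # Candidate thresholds: midpoints between consecutive distinct sorted values.
    distinct = sorted(set(values))
    for lo, hi in zip(distinct, distinct[1:]):
        threshold = (lo + hi) / 2
        left = [y for x, y in zip(values, labels) if x <= threshold]
        right = [y for x, y in zip(values, labels) if x > threshold]
        score = (len(left) * gini(left) + len(right) * gini(right)) / len(labels)
        if score < best[1]:
            best = (threshold, score)
    return best


# Hypothetical toy data: plays per game (pace) and whether the game went over.
pace = [58, 60, 61, 66, 68, 70]
went_over = [0, 0, 0, 1, 1, 1]
threshold, impurity = best_split(pace, went_over)
print(threshold, impurity)  # 63.5 0.0
```

A full tree repeats this search inside each branch, which is how a question about pace can end up nested under a question about quarterback health.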

The novice trap is to treat the method name as magic. The useful move is to ask what information the method can learn, what it cannot learn, and what kind of sports question it is actually built to answer. A method that is excellent for ranking team strength can be poor for a single player prop, and a method that wins a backtest can still be unbettable if the edge appears only after the market has moved.

Start with the target. A spread model, moneyline model, player prop projection, DFS lineup optimizer, and fantasy ranking all answer different questions. Then check the timestamp of every feature. If the feature would not have been known before the bet, contest lock, or lineup decision, it does not belong in the model. Finally, compare the output to the right benchmark: the closing line, the posted prop, the field ownership, or the best available projection.
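The timestamp check can be made mechanical. The sketch below assumes each feature is stored alongside the time its value became known; the feature names, values, and dates are hypothetical.

```python
from datetime import datetime


def usable_features(features, decision_time):
    """Keep only features whose value was known strictly before the decision."""
    return {
        name: value
        for name, (value, known_at) in features.items()
        if known_at < decision_time
    }


# Hypothetical snapshot: name -> (value, time the value became known).
decision_time = datetime(2024, 10, 6, 12, 0)
features = {
    "qb_status": ("active", datetime(2024, 10, 6, 11, 30)),
    "opening_spread": (-3.5, datetime(2024, 9, 30, 9, 0)),
    "closing_spread": (-4.5, datetime(2024, 10, 6, 12, 59)),  # known too late
}
kept = usable_features(features, decision_time)
print(sorted(kept))  # ['opening_spread', 'qb_status']
```

Running a filter like this before training, rather than trusting column names, is one way to catch a closing line that slipped into a pre-game feature set.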

Method-by-method guide

random-forest

A random forest averages many decision trees, each trained on a bootstrap sample of the games and limited to a random subset of features at each split, which reduces overreaction to any one branch. In sports terms, this is the part of the model that decides how to translate noisy pre-game inputs into a usable betting, fantasy, or DFS signal instead of a loose opinion.

Where it helps: For NFL cover modeling, it can combine injuries, travel, pace, and market features without forcing one linear relationship. The practical test is whether the block improves decisions on games it has not seen, not whether it explains last night's box score after the answer is known.

Where it fails: It can smooth away rare but important edges and can still overfit if duplicated games or leaky features enter the forest. The fix is usually cleaner targets, stricter time cuts, a smaller feature set, or a calibration layer before the output reaches a staking or lineup workflow.
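The averaging idea can be shown with a deliberately tiny forest. This is a sketch under simplifying assumptions, not how production libraries build forests: each "tree" is a one-split stump, each stump sees a bootstrap sample and one randomly chosen feature, and the toy rows of `(rest_days, travel_miles)` are invented.

```python
import random


def fit_stump(rows, labels, feature):
    """Pick the threshold on one feature that misclassifies the fewest rows."""
    best = None
    for threshold in sorted({row[feature] for row in rows}):
        for above_label in (0, 1):
            errors = sum(
                (above_label if row[feature] > threshold else 1 - above_label) != y
                for row, y in zip(rows, labels)
            )
            if best is None or errors < best[0]:
                best = (errors, threshold, above_label)
    _, threshold, above_label = best
    return feature, threshold, above_label


def predict_stump(stump, row):
    feature, threshold, above_label = stump
    return above_label if row[feature] > threshold else 1 - above_label


def fit_forest(rows, labels, n_trees=25, seed=0):
    """Bagging sketch: each stump sees a bootstrap sample and one random feature."""
    rng = random.Random(seed)
    n_features = len(rows[0])
    forest = []
    for _ in range(n_trees):
        idx = [rng.randrange(len(rows)) for _ in rows]  # sample with replacement
        feature = rng.randrange(n_features)
        sample_rows = [rows[i] for i in idx]
        sample_labels = [labels[i] for i in idx]
        forest.append(fit_stump(sample_rows, sample_labels, feature))
    return forest


def predict_proba(forest, row):
    """Share of stumps voting 1; an average vote, not a calibrated probability."""
    return sum(predict_stump(stump, row) for stump in forest) / len(forest)


# Hypothetical toy data: (rest_days, travel_miles) -> covered the spread (1) or not (0).
rows = [(3, 2000), (4, 1800), (6, 300), (7, 0), (10, 200), (5, 2500), (9, 100), (8, 400)]
labels = [0, 0, 1, 1, 1, 0, 1, 1]
forest = fit_forest(rows, labels)
print(predict_proba(forest, (10, 0)), predict_proba(forest, (3, 2500)))
```

Because every stump is trained on a slightly different resample, no single odd game decides the vote, which is the variance reduction the forest is meant to deliver.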

extratrees-classifier

Extra trees (extremely randomized trees) add more randomness to tree splits by drawing candidate split thresholds at random instead of searching for the best one. That can reduce variance and helps test whether a signal is robust.

Where it helps: It helps when an NFL dataset has many noisy interaction candidates and the builder wants a less brittle classifier.

Where it fails: It may underfit sharp thresholds, such as a key injury threshold, because the splits are intentionally more random.

gradient-boosting

Gradient boosting builds trees sequentially, with each new tree fit to the errors left by the trees before it.

Where it helps: It can learn layered NFL patterns where injury context, travel, pace, and market number interact in specific ways.

Where it fails: It can chase noise if the learning rate, depth, and early stopping are not controlled on a true walk-forward split.
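The sequential correction can be sketched for the simplest case, squared-error regression with one-split stumps. This is an illustrative toy, not the algorithm of any particular library; the `pace_rank` and `points` values are made up, and `learning_rate` and `n_rounds` are the knobs the failure mode above refers to.

```python
def fit_regression_stump(xs, residuals):
    """Best single split on xs that minimizes squared error of the residuals."""
    best = None
    # Skip the largest value so the right-hand side is never empty.
    for threshold in sorted(set(xs))[:-1]:
        left = [r for x, r in zip(xs, residuals) if x <= threshold]
        right = [r for x, r in zip(xs, residuals) if x > threshold]
        left_mean = sum(left) / len(left)
        right_mean = sum(right) / len(right)
        sse = sum((r - left_mean) ** 2 for r in left)
        sse += sum((r - right_mean) ** 2 for r in right)
        if best is None or sse < best[0]:
            best = (sse, threshold, left_mean, right_mean)
    return best[1:]


def boost(xs, ys, n_rounds=20, learning_rate=0.3):
    """Each round fits a stump to the current residuals and adds a shrunken copy."""
    base = sum(ys) / len(ys)
    preds = [base] * len(ys)
    stumps, history = [], []
    for _ in range(n_rounds):
        residuals = [y - p for y, p in zip(ys, preds)]
        threshold, left_mean, right_mean = fit_regression_stump(xs, residuals)
        stumps.append((threshold, left_mean, right_mean))
        preds = [
            p + learning_rate * (left_mean if x <= threshold else right_mean)
            for x, p in zip(xs, preds)
        ]
        history.append(sum((y - p) ** 2 for y, p in zip(ys, preds)) / len(ys))
    return base, stumps, history


def predict(base, stumps, learning_rate, x):
    """Sum the base value and every stump's shrunken correction."""
    return base + learning_rate * sum(
        left if x <= threshold else right for threshold, left, right in stumps
    )


# Hypothetical toy data: a pace rank and the points a team scored.
pace_rank = [1, 2, 3, 4, 5, 6]
points = [20, 21, 23, 27, 30, 31]
base, stumps, history = boost(pace_rank, points)
print(round(history[0], 3), round(history[-1], 3))  # training error falls each round
```

Training error keeps falling as rounds are added, which is exactly why the stopping point has to be chosen on later games the model has not seen.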

xgboost

XGBoost is a regularized gradient boosting implementation known for strong tabular performance and careful control of tree growth.

Where it helps: It is useful when a sports dataset has many engineered features and the builder needs strong ranking power.

Where it fails: It can produce impressive backtests that disappear live if the feature set leaks market movement or lineup status.

lightgbm-classifier

LightGBM is a fast gradient boosting classifier that can handle large tabular datasets efficiently.

Where it helps: It helps when testing many NFL, NBA, or MLB features across seasons and needing rapid iteration.

Where it fails: Its speed can encourage careless feature searches, which raises the risk of tuning to the validation window.

catboost

CatBoost is a boosting method with strong support for categorical variables. It relies on ordered boosting and ordered target statistics to limit target leakage during training.

Where it helps: It can help when sport categories like team, venue, surface, or role matter and need less manual encoding.

Where it fails: It can still memorize category identity if the categories are too close to the outcome or too sparse.
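The idea behind leakage-aware category encoding can be sketched as follows. This is a simplified illustration in the spirit of ordered target statistics, not CatBoost's internal code: rows are assumed to be in chronological order, and `prior` and `prior_weight` are hypothetical smoothing parameters.

```python
def ordered_target_encoding(categories, targets, prior=0.5, prior_weight=1.0):
    """Encode each row's category using only the targets of EARLIER rows."""
    sums, counts, encoded = {}, {}, []
    for category, target in zip(categories, targets):
        s = sums.get(category, 0.0)
        n = counts.get(category, 0)
        encoded.append((s + prior * prior_weight) / (n + prior_weight))
        # Update running totals only after encoding, so a row never sees its own label.
        sums[category] = s + target
        counts[category] = n + 1
    return encoded


# Hypothetical toy data: team for each game and whether it covered.
teams = ["BUF", "KC", "BUF", "BUF", "KC"]
covered = [1, 0, 1, 0, 1]
encoded = ordered_target_encoding(teams, covered)
print([round(value, 3) for value in encoded])  # [0.5, 0.5, 0.75, 0.833, 0.25]
```

A sparse category stays close to the prior, which limits memorization, but a team that appears in only a handful of rows can still swing hard on one result.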

adaboost

AdaBoost combines weak learners by putting more weight on the examples the prior learners missed.

Where it helps: It can expose whether a simple sports classifier improves when hard-to-classify games receive more attention.

Where it fails: It can be sensitive to mislabeled or noisy games because those mistakes receive extra weight.
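One round of the reweighting shows why noisy games are a risk. The sketch below follows the classic binary AdaBoost update; the four equally weighted games and the single miss are a made-up example.

```python
import math


def adaboost_round(weights, correct):
    """One AdaBoost weight update for binary labels.

    weights: current example weights (sum to 1).
    correct: True where the weak learner classified the example correctly.
    """
    error = sum(w for w, ok in zip(weights, correct) if not ok)
    if not 0 < error < 0.5:
        raise ValueError("weak learner must have weighted error in (0, 0.5)")
    alpha = 0.5 * math.log((1 - error) / error)  # the learner's vote strength
    raw = [w * math.exp(-alpha if ok else alpha) for w, ok in zip(weights, correct)]
    total = sum(raw)
    return alpha, [w / total for w in raw]


weights = [0.25, 0.25, 0.25, 0.25]
alpha, new_weights = adaboost_round(weights, [True, True, True, False])
print(round(alpha, 3), [round(w, 3) for w in new_weights])
# 0.549 [0.167, 0.167, 0.167, 0.5]
```

After one round the single missed game carries half of the total weight. If that game was mislabeled, the next learner spends most of its effort fitting an error in the data.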

Sports walkthrough

For an NFL cover model, trees can split on injuries, travel, pace, rest, opponent strength, and market data. One branch might describe a rested home favorite with positive injury news. Another might describe a traveling underdog in bad weather with a stale line. Boosting then builds many trees that correct one another, while forests average many trees to reduce variance.

Concrete names keep the model honest: Justin Herbert can change passing efficiency branches, Jonathan Taylor can alter run-heavy game script branches, and the Bills can create market branches when public and sharp action disagree. Those examples are not there to imply a pick; they force the workflow to deal with real role changes, injury context, usage shifts, opponent quality, and market reaction instead of abstract rows in a table.

The workflow is deliberately boring. Define the event, gather only pre-decision information, produce a projection or probability, compare it with the market or contest environment, size the action conservatively, and then record what happened. When the number closes, the closing price becomes the first audit. When the game finishes, the outcome becomes the second audit. Over a useful sample, both audits matter more than whether one bet won.
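The first audit, price against the close, is simple arithmetic. The sketch below uses one common convention, the difference in implied probability between closing and entry American odds; other definitions of closing-line value exist, and the -110 and -125 prices are hypothetical.

```python
def implied_probability(american_odds):
    """Break-even win probability implied by American odds (vig included)."""
    if american_odds < 0:
        return -american_odds / (-american_odds + 100)
    return 100 / (american_odds + 100)


def closing_line_value(entry_odds, closing_odds):
    """Positive when the close implies a higher probability than the entry price."""
    return implied_probability(closing_odds) - implied_probability(entry_odds)


# Hypothetical bet: taken at -110, the same side closed at -125.
clv = closing_line_value(-110, -125)
print(round(clv, 4))  # 0.0317
```

A positive value means the market moved toward the bet after entry, which is the process signal to log regardless of how the game finishes.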

Validation workflow

Validate this method family in the same shape it will be used live. Train on older games, tune on a later slice, and reserve the newest window for the final check. If the method uses player props, keep player identity, team context, injury status, and market number aligned to the timestamp when the decision would have been made. If it uses DFS simulations, lock the slate, salary, ownership, and injury assumptions before grading lineups.
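The train, tune, and final-check layout can be generated rather than hand-picked. This is a minimal sketch that splits by season; the function name, the `min_train` parameter, and the season list are illustrative assumptions.

```python
def walk_forward_splits(seasons, min_train=2):
    """Yield (train, tune, final) season lists that always move forward in time."""
    ordered = sorted(set(seasons))
    for i in range(min_train, len(ordered) - 1):
        yield ordered[:i], [ordered[i]], [ordered[i + 1]]


for train, tune, final in walk_forward_splits([2019, 2020, 2021, 2022, 2023]):
    print(train, tune, final)
# [2019, 2020] [2021] [2022]
# [2019, 2020, 2021] [2022] [2023]
```

Every fold tunes and checks on seasons strictly later than the training data, so no fold can borrow information from the future.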

Compare against a plain benchmark before celebrating lift. A model should beat a naive average, a market-only view, and a smaller interpretable version before the extra complexity deserves product space. The important comparison is not whether the method can explain the past; it is whether it improves decisions after fees, vig, contest rake, stale lines, and real lineup constraints are included.
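The cost of the vig is easy to put a number on. The sketch below computes expected profit per unit staked from a win probability and American odds, ignoring pushes; the 55% and 52% probabilities are hypothetical model outputs.

```python
def profit_per_unit(american_odds):
    """Profit on a winning 1-unit stake at American odds."""
    return 100 / -american_odds if american_odds < 0 else american_odds / 100


def expected_value(win_probability, american_odds):
    """Expected profit per unit staked, ignoring pushes."""
    return win_probability * profit_per_unit(american_odds) - (1 - win_probability)


print(round(expected_value(0.55, -110), 4))  # 0.05
print(round(expected_value(0.52, -110), 4))  # -0.0073
```

A model that is right 52% of the time still loses money at -110, so lift over a coin flip is not the bar. The bar is lift over the break-even rate implied by the price.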

Review failures as carefully as wins. A losing pick that beat the close can still be a useful process signal, while a winning pick that took a bad number can be a warning. Group errors by sport, market, player role, team, confidence bucket, and price range so the builder can tell the difference between normal variance and a broken assumption.

Expert notes

Tree models handle nonlinear interactions well, but that strength can become overfitting. They will gladly learn schedule quirks, duplicated games, or one-off injury clusters if validation is weak.

Feature importance in boosted trees is not always causal. A market feature can appear important because it absorbs many other signals, not because the model discovered an independent edge.

Categorical handling differs by implementation. CatBoost can be strong on categories, but identity-like categories still need careful validation so the model does not memorize teams or players.

Use probability calibration after tree models. Boosted classifiers often rank outcomes well but output probabilities that are too sharp for betting.
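Before repairing probabilities, it helps to measure how far off they are. The sketch below computes a Brier score and a simple reliability table; the predicted probabilities and outcomes are invented to show an overconfident model, and the bucket count is an arbitrary choice.

```python
def brier_score(probabilities, outcomes):
    """Mean squared gap between predicted probability and the 0/1 outcome."""
    return sum((p - y) ** 2 for p, y in zip(probabilities, outcomes)) / len(outcomes)


def reliability_table(probabilities, outcomes, n_buckets=5):
    """Per-bucket (mean predicted probability, observed win rate, count)."""
    buckets = [[] for _ in range(n_buckets)]
    for p, y in zip(probabilities, outcomes):
        index = min(int(p * n_buckets), n_buckets - 1)  # p == 1.0 goes in the top bucket
        buckets[index].append((p, y))
    table = []
    for rows in buckets:
        if rows:
            mean_p = sum(p for p, _ in rows) / len(rows)
            win_rate = sum(y for _, y in rows) / len(rows)
            table.append((round(mean_p, 3), round(win_rate, 3), len(rows)))
    return table


# Hypothetical predictions from an overconfident classifier.
probs = [0.9, 0.85, 0.9, 0.95, 0.1, 0.15, 0.1, 0.05]
outcomes = [1, 0, 1, 0, 0, 1, 0, 0]
print(round(brier_score(probs, outcomes), 5))  # 0.29875
print(reliability_table(probs, outcomes))  # [(0.1, 0.25, 4), (0.9, 0.5, 4)]
```

In this toy table the model says 90% where the observed rate is 50%. A gap like that is what a calibration layer is meant to close before the output is compared with a price.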

When not to use this family

Do not use a method just because it is more advanced than a baseline. If the data is thin, the target is unstable, the sport context changed, or the market already absorbs the signal, a simpler model with better validation is usually the better tool. The warning sign is a model that needs a long explanation for why its live results should be ignored.

Watch for leakage, repeated samples, and hidden correlation. A player prop model can accidentally learn same-game information through closing lines, a DFS optimizer can double count teammate correlations, and a ratings model can overstate certainty after one noisy result. If a method cannot survive a walk-forward split, a holdout season, and a calibration check, keep it in research.

Decision checklist

| Modeling question | Useful block | Risk check |
|---|---|---|
| What is the cleanest baseline for this sports decision? | random-forest | Confirm the target, feature timestamp, and market comparison are all aligned before training. |
| Which block adds lift without turning noise into confidence? | adaboost | Compare walk-forward performance, calibration, and closing-line value before trusting the output. |

How Shark Snip uses it

Shark Snip uses random-forest, extratrees-classifier, gradient-boosting, xgboost, lightgbm-classifier, catboost, and adaboost when nonlinear sport interactions deserve more capacity than a linear baseline.

The block names above are intentionally visible in this article so model builders can connect the concept to the actual building blocks in Tinker, DFS simulation, and the model marketplace. Shark Snip treats these methods as components in a workflow: feature preparation, model fit, probability repair, portfolio construction, and post-game evaluation. No block is allowed to skip validation because every sport has small samples, changing incentives, and noisy injury information.

The most useful model is not the one with the most intimidating name. It is the one whose assumptions match the sport question, whose inputs were available at decision time, whose output is calibrated enough to compare with a price, and whose failures are visible before real bankroll or contest exposure is increased.

Related reading and tools

Keep going with building your first model with Tinker, closing-line value, and bet tracking. These links connect the method family to the betting, DFS, and model-building workflows readers already use.

Named modeling examples

A model page is more useful when the feature examples are concrete. Josh Allen rushing attempts, Ja'Marr Chase target share, Nikola Jokic assist rate, Tarik Skubal strikeout projection, Igor Shesterkin starter confirmation, and Islam Makhachev control time are all different prediction problems. A single “player form” feature cannot explain them all, so the model needs sport-specific inputs and review notes.

- NFL: separate route participation, pressure rate, and red-zone role from box-score volume.

- NBA: separate usage, minute projection, pace, and back-to-back fatigue.

- MLB: separate starter skill, handedness, park, weather, and lineup confirmation.

- NHL and UFC: late confirmations and fight-week news can matter more than a season average.

Model inputs worth naming

Use names as evidence, not decoration. The useful SEO win is that players such as Justin Herbert, Jonathan Taylor, Josh Allen, Ja'Marr Chase, and Bijan Robinson, and teams such as the Bills, Chiefs, Eagles, and Lions, appear inside decisions, thresholds, and internal links instead of being dumped into a keyword list.

- NFL model: route participation for Ja'Marr Chase, rushing attempts for Josh Allen, pressure rate allowed by the Bengals, and red-zone carry share for Jonathan Taylor should be separate features.

- NBA model: usage, projected minutes, rest, and pace should move Nikola Jokic or Shai Gilgeous-Alexander props differently than a one-number power rating would.

- MLB model: Tarik Skubal strikeout projection, Coors Field park factor, lineup confirmation, and bullpen rest need their own columns.

- Review loop: grade entry price, closing price, bet result, and model error separately so lucky results do not hide bad forecasts.

Build or audit the workflow in Tinker and review it with CLV.

Educational analysis only, not a bet recommendation. Model outputs can be wrong, markets move, and sports data can contain injuries, role changes, reporting gaps, and contest-specific constraints.